Reference-to-Video (R2V) — AI Product Video from Photos

Upload a product photo and a model reference image. OmniShow holds color, texture, and shape consistent across every frame — no drift, no distortion, no 3D setup.

Product

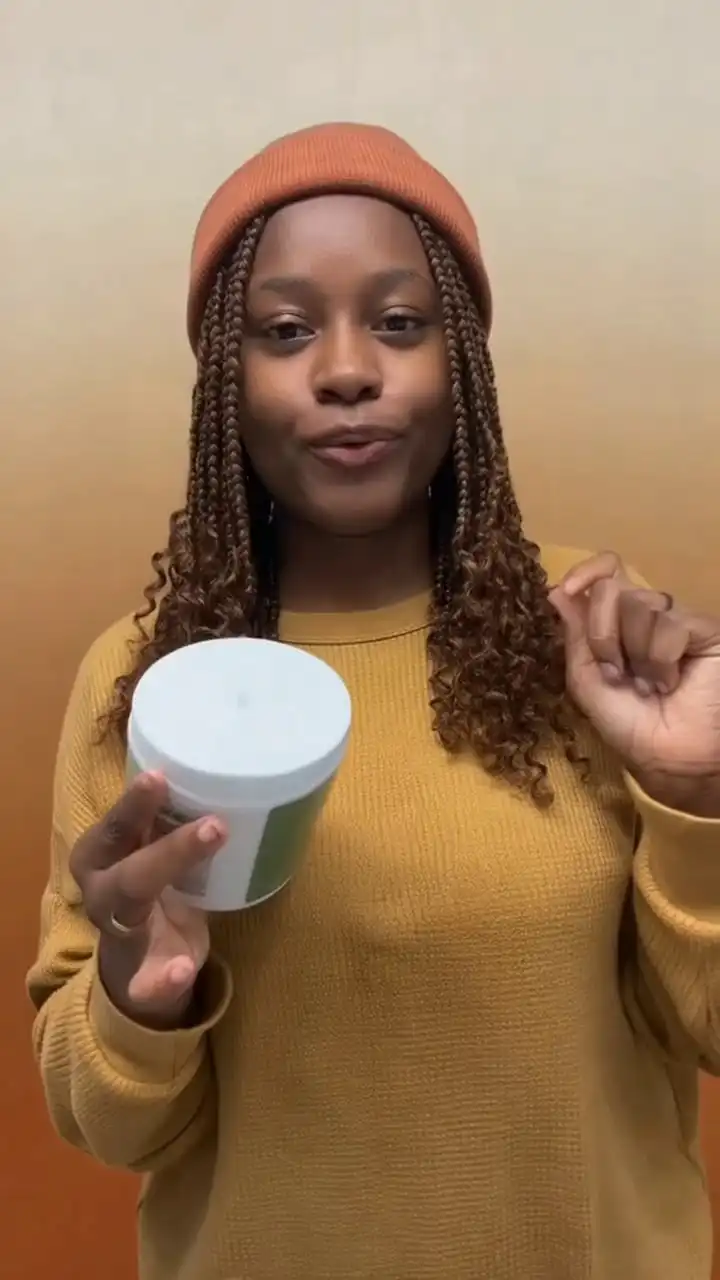

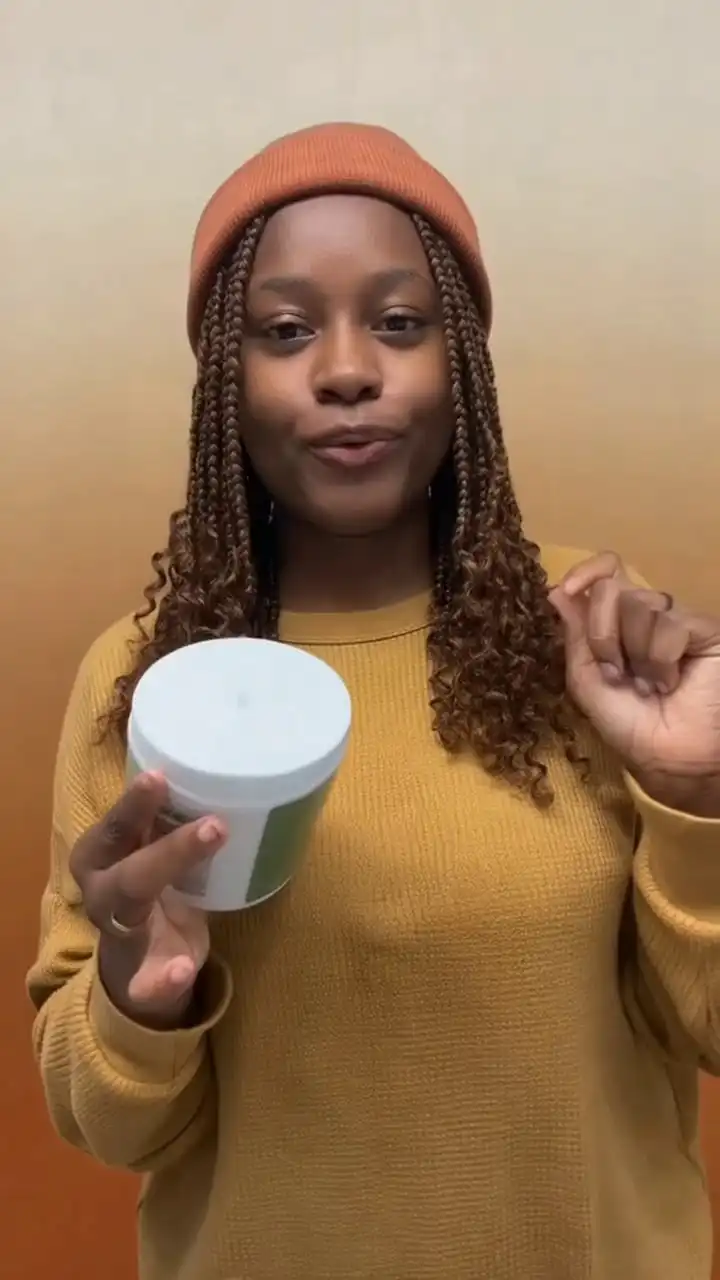

Product Model

Model

Your product photo becomes a cinematic video. No studio. No crew.

Upload your product photo. Add a voiceover or pose. OmniShow generates a studio-quality video of a real person holding, using, and presenting your product — no filming required.

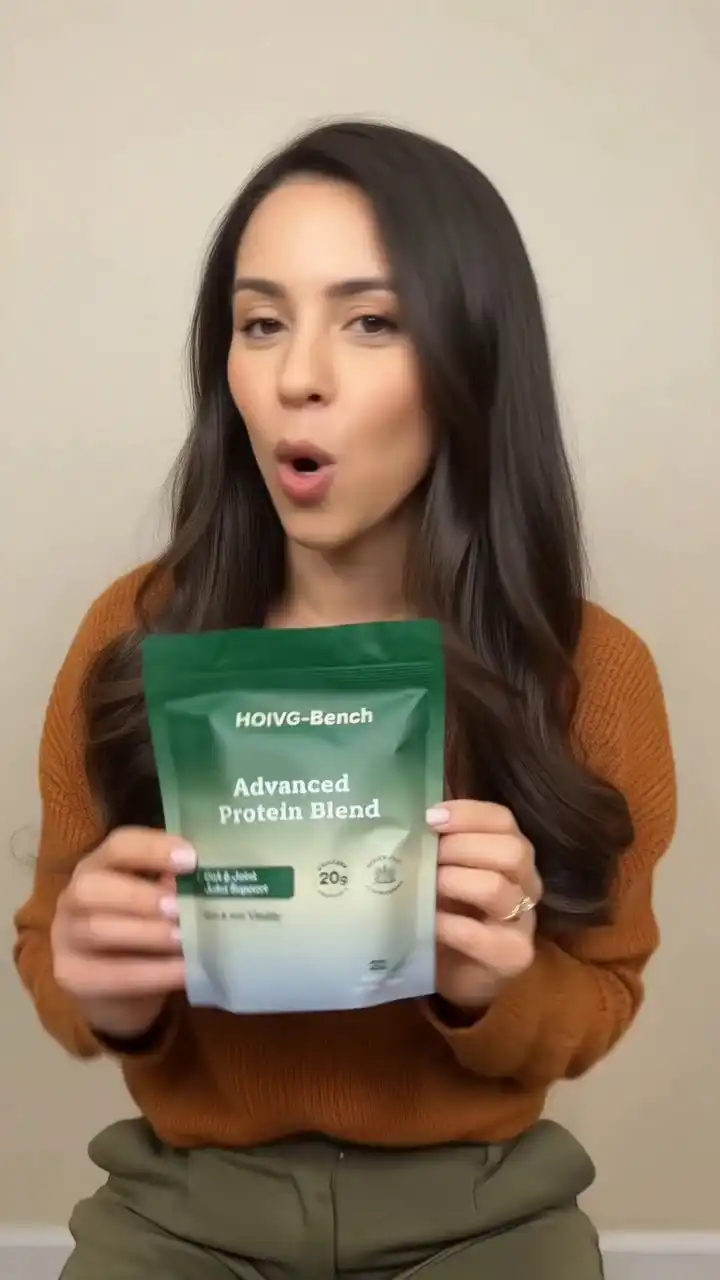

OmniShow is an end-to-end AI video generator for human-object interaction video generation (HOIVG). It accepts up to four input conditions (text, reference image, audio, and pose) and synthesizes high-quality human-object interaction (HOI) video from any combination of them. It's the only platform purpose-built for HOIVG and independently validated on HOIVG-Bench.

Human-object interaction means making a hand genuinely hold something: stable grip, natural contact, accurate weight response. Most AI video tools fake it. OmniShow was built specifically to get it right.

Diverse, realistic, dynamic — generated entirely by OmniShow.

Every clip below is AI-generated. No filming. No editing. No production team.

OmniShow handles human-object interaction video generation across four input modalities. Use one or combine all four — the model adapts, no retraining required.

Upload a product photo and a model reference image. OmniShow holds color, texture, and shape consistent across every frame — no drift, no distortion, no 3D setup.

Add a voiceover MP3. OmniShow syncs lip movements, facial expressions, and gestures to the audio frame by frame, in one pass. No manual sync. No dubbing.

Provide a pose sequence or video reference. OmniShow follows the defined motion (hand position, body angle, interaction path) while keeping product contact natural throughout. No motion capture rig required.

Every input combined (text, reference image, audio, and pose sequence), processed together in a single generation. No stitching, no separate passes, no consistency loss between stages.

Included in every generation, across all four modes.

OmniShow generates up to 10 seconds in a single pass — no cuts, no frame-joining, no stitching artifacts. Long enough for a complete product demo from pick-up to placement.

Hands hold, grip, and interact with products the way they actually do — stable contact, natural finger wrap, realistic weight. No clipping, no floating, no mesh errors.

Face, hair, outfit, and proportions stay identical from the first frame to the last. Define the character once — OmniShow keeps them locked for the full clip.

Upload a portrait and an audio track. OmniShow generates a talking or singing avatar with accurate lip sync, natural facial expression, and consistent identity — no animation experience required.

OmniShow is validated on HOIVG-Bench — the first benchmark designed specifically to measure human-object interaction video generation quality across four dimensions: visual fidelity, motion naturalness, identity consistency, and condition alignment.

Across all four dimensions, OmniShow outperforms every baseline model tested — including HunyuanCustom, HuMo-17B, VACE, Phantom-14B, and AnchorCrafter.

OmniShow ranks #1 across all four generation modes in HOIVG-Bench — the only model evaluated end-to-end for human-object interaction video generation.

| Model | R2V | RA2V | RP2V | Long-Shot |
|---|---|---|---|---|
| OmniShow | ✓ Best | ✓ Best | ✓ Best | ✓ Up to 10s |
| HunyuanCustom | ⚠ Lower fidelity | ⚠ Lower sync | — | ✗ |
| HuMo-17B | ⚠ Lower fidelity | ⚠ Lower sync | — | ✗ |
| VACE | ⚠ Lower fidelity | — | ⚠ Lower adherence | ✗ |
| Phantom-14B | ⚠ Lower fidelity | — | — | ✗ |
| AnchorCrafter | — | — | ⚠ Lower adherence | ✗ |

Most AI video tools generate motion. OmniShow generates interaction — and that difference shows up clearly in a side-by-side.

| Capability | OmniShow | HeyGen | Kling 3.0 | Runway Gen-4.5 | Seedance 2.0 |
|---|---|---|---|---|---|
| Person holding & using your product | ✅ Purpose-built | ⚠️ Avatar only | ⚠️ General motion | ❌ Not addressed | ⚠️ General motion |
| All 4 inputs at once (text · image · audio · pose) | ✅ All four | ⚠️ 2 of 4 | ⚠️ 3 of 4 (no pose) | ⚠️ 3 of 4 (no pose) | ⚠️ 3 of 4 (no pose) |
| Stable hand & product contact | ✅ Frame-locked | ⚠️ Avatar hands only | ⚠️ Inconsistent | ❌ Not addressed | ❌ Not addressed |
| Clip length | ✅ Up to 10s | ✅ Multi-minute | ✅ Up to 15s | ⚠️ 2–10s native | ✅ Up to 15s |
| Audio lip-sync | ✅ Full body | ✅ Full body | ✅ 5 languages | ⚠️ No native audio | ✅ Native audio |
| Pose / motion control | ✅ Full body pose | ❌ | ⚠️ Ref video only | ⚠️ Camera only | ❌ |
| Product consistency across frames | ✅ Locked | ⚠️ Varies | ⚠️ Varies | ⚠️ Varies | ⚠️ Varies |

No video production experience needed. No creative team required. Just a product photo and a few minutes.

Drop in your product photo and, optionally, a human model reference image. OmniShow analyzes color accuracy, surface texture, shape geometry, and proportions — and locks them in for every frame of the output. Supports JPG, PNG, WebP. Works with plain product shots, lifestyle images, and 3D renders.
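If you prepare product photos in bulk, a quick local check against the listed formats can catch unsupported files before upload. The snippet below is only a sketch: the function name `is_supported_image` is hypothetical, it is not part of any OmniShow API, and treating `.jpeg` as equivalent to `.jpg` is an assumption.

```python
from pathlib import Path

# Formats listed on this page (JPG, PNG, WebP).
# Accepting ".jpeg" alongside ".jpg" is an assumption, not a documented rule.
SUPPORTED_EXTENSIONS = {".jpg", ".jpeg", ".png", ".webp"}


def is_supported_image(filename: str) -> bool:
    """Return True if the file extension matches one of the listed formats."""
    return Path(filename).suffix.lower() in SUPPORTED_EXTENSIONS


if __name__ == "__main__":
    for name in ["sneaker_front.PNG", "sneaker_side.webp", "sneaker_top.tiff"]:
        status = "ok" if is_supported_image(name) else "convert before upload"
        print(f"{name}: {status}")
```

This checks the file name only. It does not inspect the image data, so a mislabeled file would still pass.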

Add any combination of inputs. OmniShow adapts — one input or all four, no retraining required.
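To make "any combination" concrete: four optional inputs give 2^4 - 1 = 15 non-empty combinations. The short sketch below enumerates them. The input labels are illustrative names chosen for this example, not identifiers from OmniShow itself.

```python
from itertools import combinations

# Illustrative labels for the four input conditions named on this page.
INPUTS = ("text", "reference_image", "audio", "pose")


def input_combinations(inputs=INPUTS):
    """List every non-empty combination of the given inputs."""
    return [
        combo
        for size in range(1, len(inputs) + 1)
        for combo in combinations(inputs, size)
    ]


combos = input_combinations()

if __name__ == "__main__":
    print(len(combos))  # 15
    for combo in combos:
        print(" + ".join(combo))
```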

OmniShow processes your video in the cloud and delivers a finished clip — no GPU, no software install required. Preview, download, and publish directly to your platform of choice. Generation time varies by complexity and plan.

OmniShow is built for e-commerce sellers, social commerce brands, creators, marketing teams, and AI researchers.

Stop paying for product video shoots. OmniShow turns any product photo into a cinematic demo — ready for your Amazon listing, A+ Content, or brand storefront. Generate at catalog scale, not shot by shot.

TikTok Shop buyers scroll fast. You have 2 seconds. OmniShow generates 9:16 portrait videos that look produced, not generated. Add a voiceover and your model lip-syncs automatically — ready to publish.

Full control over model motion, product interaction, and character dialogue — without a camera, crew, or set. Define the pose, add your audio, and OmniShow handles the physics of the interaction.

OmniShow is fully open-sourced. Access model weights, reproduce HOIVG-Bench results, and build on the framework directly.

★★★★★

"The hand-product interaction in OmniShow clips is the most convincing I've seen from any AI tool. Customers actually comment on how real it looks."

★★★★★

"I can define exactly how the model holds our product and OmniShow nails it every time. The pose control is a game-changer for our creative workflow."

★★★★★

"We replaced our entire video production workflow with OmniShow. 10x the content. 20% of the cost. TikTok Shop Top-500 and growing."

★★★★★

"We shoot zero footage now. Every SKU gets a demo video in minutes. Our Amazon conversion rate went up 34% in the first month."

★★★★★

"The lip-sync quality with RA2V is remarkable. We produce multilingual spokesperson videos for five markets — all from the same reference photo."

★★★★★

"As a researcher, seeing a production-quality HOIVG pipeline this accessible is genuinely impressive. The benchmark results hold up under scrutiny."

Built on peer-reviewed research by ByteDance, CUHK, Monash University, and The University of Hong Kong. Open-sourced on GitHub. Independently validated on HOIVG-Bench — the field's first dedicated benchmark for human-object interaction video generation.

Everything you need to know about OmniShow and human-object interaction video generation.